ETH develops chip against deepfakes

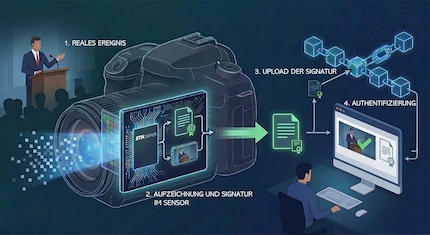

A project at ETH Zurich adds a cryptographic signature to photos and videos at the hardware level. In future, this signature is meant to be readable in a decentralised way, which would allow platforms to verify genuine recordings automatically.

Fake videos and images of politicians, celebrities or the Pope: deepfakes are no longer a technical gimmick, but a business model for fraud and a risk to democracy. Misinformation and disinformation are on the rise, while countermeasures can barely keep up. As a result, many people no longer trust even credible sources.

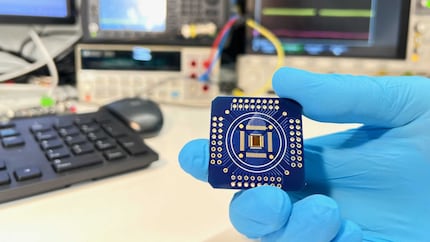

Researchers at ETH Zurich want to counter this problem with a hardware solution. They have developed an image sensor that cryptographically signs its data directly on the chip, making any later manipulation detectable. Anyone can verify that an image is genuine.

Decentralised verification via blockchain

Alongside the image data for a video recording, the prototype also generates a digital signature that is permanently tied to the individual sensor. It documents which chip the data came from and when it was created. Any subsequent change to the footage would invalidate the signature. To make a deepfake pass as «genuine», attackers would have to compromise the hardware itself, i.e. physically attack the chip. That would take so much effort that faking content for social media would no longer be economically worthwhile.

Source: Felix Franke / ETH Zürich

Camera manufacturers could store the signatures generated by the chip in a public, blockchain-based register. When a file is uploaded or checked, platforms or verification tools compare its signature with the register. If it matches, the content counts as an authentic recording; a missing or mismatched entry is a warning sign. The chain of trust would thus shift away from platforms and software towards the hardware.
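
The article does not say how such a register would be organised. The following minimal sketch assumes that each entry pairs a chip signature with a digest of the signed content, and that entries are chained by hash, which is the tamper-evidence property a blockchain provides; consensus and replication across many nodes are left out. `Entry`, `Register`, `publish`, `check_upload` and `chain_is_intact` are hypothetical names. The lookup mirrors the outcomes described above: a matching entry means authentic, while a missing or non-matching one is a warning. A real verifier would also check the signature cryptographically against the chip's public key, which this sketch skips.

```python
from __future__ import annotations

import hashlib
import json
from dataclasses import dataclass

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


@dataclass(frozen=True)
class Entry:
    """One register entry as a camera maker might publish it (hypothetical layout)."""
    signature_hex: str   # signature produced by the sensor chip
    content_sha256: str  # digest of the signed file content
    prev_hash: str       # hash of the previous entry; this links the chain

    def entry_hash(self) -> str:
        data = json.dumps([self.signature_hex, self.content_sha256, self.prev_hash])
        return hashlib.sha256(data.encode()).hexdigest()


class Register:
    """Append-only, hash-chained log: a single-process stand-in for a
    blockchain register (no consensus, no replication)."""

    def __init__(self) -> None:
        self.entries: list[Entry] = []

    def publish(self, signature: bytes, content: bytes) -> None:
        prev = self.entries[-1].entry_hash() if self.entries else GENESIS
        digest = hashlib.sha256(content).hexdigest()
        self.entries.append(Entry(signature.hex(), digest, prev))

    def chain_is_intact(self) -> bool:
        """Rewriting an earlier entry breaks the prev_hash link of the next one."""
        prev = GENESIS
        for entry in self.entries:
            if entry.prev_hash != prev:
                return False
            prev = entry.entry_hash()
        return True

    def check_upload(self, signature: bytes, content: bytes) -> str:
        """Platform-side lookup: 'authentic', 'mismatch' or 'missing'."""
        digest = hashlib.sha256(content).hexdigest()
        for entry in self.entries:
            if entry.signature_hex == signature.hex():
                return "authentic" if entry.content_sha256 == digest else "mismatch"
        return "missing"


# Usage with placeholder byte strings instead of real chip signatures.
register = Register()
register.publish(b"sig-A", b"frame A")
register.publish(b"sig-B", b"frame B")

print(register.check_upload(b"sig-A", b"frame A"))         # authentic
print(register.check_upload(b"sig-A", b"frame A edited"))  # mismatch
print(register.check_upload(b"sig-X", b"frame X"))         # missing
print(register.chain_is_intact())                          # True
```

Hashing each entry together with its predecessor is what makes the register tamper-evident: changing an old entry would require rewriting every later one, which a decentralised network of independent copies is meant to prevent.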

Current detection methods usually look for traces of AI generation in the image, such as unnatural artefacts or inconsistent lighting. The ETH approach, by contrast, does not rely on probabilities but on verifiable proof of origin. For platforms such as YouTube or social networks, which are already testing deepfake reporting systems for politicians and journalists, the chip could make automatic verification of content possible.

Deeper integration than previous approaches

The sensor is still a prototype. The concept works with any type of image sensor, from those in cameras to those in smartphones. For widespread use, manufacturers would have to adapt their devices and agree on common register standards; whether that is realistic remains to be seen. Companies such as Sony, Canon, Adobe and Microsoft have so far taken a software-based approach to verifying the authenticity of photos: C2PA, an open standard for cryptographically signing metadata. Some cameras already support it.

Source: Caroline Arndt Foppa / ETH Zürich

The ETH solution goes deeper. It moves signature generation onto the chip and thus links the real event with the digital file at the hardware level: raw data and signature are inseparably bound together. The second difference is the decentralised register. Because of it, it no longer matters whether a company involved in processing or transmitting the data is trustworthy.
